#include <DepthwiseConv2DLayer.h>

Public Member Functions

| Return type | Member |
| --- | --- |
|  | DepthwiseConvolutionLayer () = default |
| void | convFloat32 () |
| void | convQ8uPerTensor () |
| void | convQ8uPerChannel () |
| void | convQ8i () |
| void | convQ8iHybridPerChannel () |
| void | configure (const IPortableTensor *input, const IPortableTensor *kernel, const IPortableTensor *bias, const uint32_t paddingLeft, const uint32_t paddingRight, const uint32_t paddingTop, const uint32_t paddingBottom, const uint32_t strideW, const uint32_t strideH, const uint32_t multiplier, const uint32_t dilationWidth, const uint32_t dilationHeight, const ir::Activation activation, IPortableTensor *output, const std::shared_ptr<ExternalContext> &external_context) |
| void | run () override |

Public Member Functions inherited from onert::exec::IFunction

| Return type | Member |
| --- | --- |
| virtual | ~IFunction () = default |
| virtual void | prepare () |

Protected Attributes

| Type | Attribute |
| --- | --- |
| const IPortableTensor * | _input {nullptr} |
| const IPortableTensor * | _kernel {nullptr} |
| const IPortableTensor * | _bias {nullptr} |
| IPortableTensor * | _output {nullptr} |
| uint32_t | _paddingLeft {0} |
| uint32_t | _paddingTop {0} |
| uint32_t | _paddingRight {0} |
| uint32_t | _paddingBottom {0} |
| uint32_t | _strideWidth {0} |
| uint32_t | _strideHeight {0} |
| uint32_t | _multiplier {0} |
| uint32_t | _dilationWidth {1} |
| uint32_t | _dilationHeight {1} |
| ir::Activation | _activation {ir::Activation::NONE} |

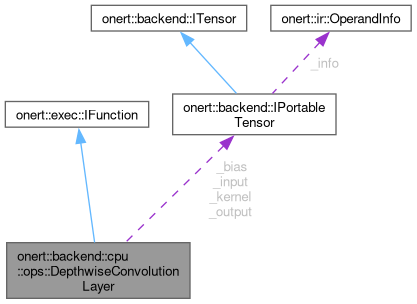

Detailed Description

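CPU-backend kernel for the depthwise 2-D convolution operation. configure() records the input, kernel, bias and output tensors together with the padding, strides, depth multiplier, dilation factors and fused activation; run() then calls one of the conv*() member functions, choosing it (according to its reference list) from the data types of _input and _kernel and the kernel's data_scales().

Definition at line 29 of file DepthwiseConv2DLayer.h.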

Constructor & Destructor Documentation

◆ DepthwiseConvolutionLayer()

onert::backend::cpu::ops::DepthwiseConvolutionLayer::DepthwiseConvolutionLayer ( ) [default]

Member Function Documentation

◆ configure()

void onert::backend::cpu::ops::DepthwiseConvolutionLayer::configure (
    const IPortableTensor *input,
    const IPortableTensor *kernel,
    const IPortableTensor *bias,
    const uint32_t paddingLeft,
    const uint32_t paddingRight,
    const uint32_t paddingTop,
    const uint32_t paddingBottom,
    const uint32_t strideW,
    const uint32_t strideH,
    const uint32_t multiplier,
    const uint32_t dilationWidth,
    const uint32_t dilationHeight,
    const ir::Activation activation,
    IPortableTensor *output,
    const std::shared_ptr<ExternalContext> &external_context )

Definition at line 291 of file DepthwiseConv2DLayer.cc.

References _activation, _bias, _dilationHeight, _dilationWidth, _input, _kernel, _multiplier, _output, _paddingBottom, _paddingLeft, _paddingRight, _paddingTop, _strideHeight, _strideWidth, onert::backend::IPortableTensor::data_scales(), onert::backend::IPortableTensor::data_type(), onert::backend::IPortableTensor::is_constant(), and onert::backend::IPortableTensor::is_dynamic().
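configure() takes explicit per-side padding, per-axis strides and dilation factors, and a depth multiplier. The sketch below shows the conventional (TensorFlow Lite-style) arithmetic that relates these parameters to the output extent. It is an illustration under that assumption, not code from DepthwiseConv2DLayer.cc; `DwOutputShape`, `dwOutputExtent` and `dwOutputShape` are hypothetical names, and the parameter order of `dwOutputShape` merely mirrors configure().

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical helper type: NHWC output extent of a depthwise convolution.
struct DwOutputShape {
  uint32_t height;
  uint32_t width;
  uint32_t channels;
};

// One spatial output dimension, assuming explicit padding on both sides.
// A kernel of size k with dilation d spans (k - 1) * d + 1 input elements.
inline uint32_t dwOutputExtent(uint32_t in, uint32_t k, uint32_t pad_before, uint32_t pad_after,
                               uint32_t stride, uint32_t dilation) {
  assert(stride > 0 && dilation > 0 && k > 0);
  const uint32_t effective_k = (k - 1) * dilation + 1;
  const uint32_t padded = in + pad_before + pad_after;
  assert(padded >= effective_k);
  return (padded - effective_k) / stride + 1;
}

// Each input channel produces `multiplier` output channels.
inline DwOutputShape dwOutputShape(uint32_t in_h, uint32_t in_w, uint32_t in_c, uint32_t k_h,
                                   uint32_t k_w, uint32_t pad_left, uint32_t pad_right,
                                   uint32_t pad_top, uint32_t pad_bottom, uint32_t stride_w,
                                   uint32_t stride_h, uint32_t multiplier, uint32_t dilation_w,
                                   uint32_t dilation_h) {
  return {dwOutputExtent(in_h, k_h, pad_top, pad_bottom, stride_h, dilation_h),
          dwOutputExtent(in_w, k_w, pad_left, pad_right, stride_w, dilation_w),
          in_c * multiplier};
}
```

For example, a 112x112x32 input with a 3x3 kernel, padding 1 on every side, stride 2, dilation 1 and multiplier 2 gives a 56x56x64 output. The conv*() reference lists below mention only _paddingLeft and _paddingTop (alongside PaddingValues::width and ::height), which suggests that right and bottom padding matter only through the output extent fixed beforehand.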

◆ convFloat32()

void onert::backend::cpu::ops::DepthwiseConvolutionLayer::convFloat32 ( )

Definition at line 72 of file DepthwiseConv2DLayer.cc.

References _activation, _bias, _dilationHeight, _dilationWidth, _input, _kernel, _multiplier, _output, _paddingLeft, _paddingTop, _strideHeight, _strideWidth, onert::util::CalculateActivationRange(), nnfw::cker::DepthwiseConvParams::depth_multiplier, nnfw::cker::DepthwiseConvParams::dilation_height_factor, nnfw::cker::DepthwiseConvParams::dilation_width_factor, nnfw::cker::DepthwiseConvParams::float_activation_max, nnfw::cker::DepthwiseConvParams::float_activation_min, onert::backend::cpu::ops::getShape(), nnfw::cker::PaddingValues::height, nnfw::cker::DepthwiseConvParams::padding_values, nnfw::cker::DepthwiseConvParams::stride_height, nnfw::cker::DepthwiseConvParams::stride_width, and nnfw::cker::PaddingValues::width.

Referenced by run().
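convFloat32() fills a nnfw::cker::DepthwiseConvParams (strides, dilation factors, depth multiplier, padding values and float activation bounds) and hands it to cker. The naive loop below is a standalone sketch of what such a call computes, assuming batch 1, NHWC tensors, a TensorFlow Lite-style kernel layout of [KH][KW][C * M], and only top/left padding passed to the kernel. `depthwiseConvRef` is a hypothetical name, not a cker function, and the real kernels are heavily optimized.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical naive reference (batch 1, NHWC). Kernel is [KH][KW][C * M] and
// bias has C * M entries. Output channel oc = ic * M + m reads only input channel ic.
std::vector<float> depthwiseConvRef(const std::vector<float>& in, int in_h, int in_w, int in_c,
                                    const std::vector<float>& kernel, int k_h, int k_w,
                                    const std::vector<float>& bias, int multiplier,
                                    int pad_top, int pad_left, int stride_h, int stride_w,
                                    int dil_h, int dil_w, int out_h, int out_w,
                                    float act_min, float act_max) {
  const int out_c = in_c * multiplier;
  assert(static_cast<int>(in.size()) == in_h * in_w * in_c);
  assert(static_cast<int>(kernel.size()) == k_h * k_w * out_c);
  assert(static_cast<int>(bias.size()) == out_c);
  std::vector<float> out(static_cast<std::size_t>(out_h) * out_w * out_c);
  for (int oy = 0; oy < out_h; ++oy)
    for (int ox = 0; ox < out_w; ++ox)
      for (int ic = 0; ic < in_c; ++ic)
        for (int m = 0; m < multiplier; ++m) {
          const int oc = ic * multiplier + m;
          float acc = bias[oc];
          for (int ky = 0; ky < k_h; ++ky)
            for (int kx = 0; kx < k_w; ++kx) {
              const int iy = oy * stride_h - pad_top + ky * dil_h;
              const int ix = ox * stride_w - pad_left + kx * dil_w;
              if (iy < 0 || iy >= in_h || ix < 0 || ix >= in_w) continue;  // zero padding
              acc += in[(iy * in_w + ix) * in_c + ic] * kernel[(ky * k_w + kx) * out_c + oc];
            }
          // Fused activation as a clamp, e.g. RELU6 -> [0, 6].
          out[(oy * out_w + ox) * out_c + oc] = std::min(std::max(acc, act_min), act_max);
        }
  return out;
}
```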

◆ convQ8i()

void onert::backend::cpu::ops::DepthwiseConvolutionLayer::convQ8i ( )

Definition at line 171 of file DepthwiseConv2DLayer.cc.

References _activation, _bias, _dilationHeight, _dilationWidth, _input, _kernel, _multiplier, _output, _paddingLeft, _paddingTop, _strideHeight, _strideWidth, onert::backend::cpu::ops::CalculateActivationRangeQuantized(), onert::backend::IPortableTensor::data_zero_point(), nnfw::cker::DepthwiseConvParams::depth_multiplier, nnfw::cker::optimized_integer_ops::DepthwiseConvPerChannel(), nnfw::cker::DepthwiseConvParams::dilation_height_factor, nnfw::cker::DepthwiseConvParams::dilation_width_factor, onert::backend::cpu::ops::getShape(), nnfw::cker::PaddingValues::height, nnfw::cker::DepthwiseConvParams::input_offset, nnfw::cker::kSame, nnfw::cker::DepthwiseConvParams::output_offset, nnfw::cker::DepthwiseConvParams::padding_type, nnfw::cker::DepthwiseConvParams::padding_values, nnfw::cker::DepthwiseConvParams::quantized_activation_max, nnfw::cker::DepthwiseConvParams::quantized_activation_min, nnfw::cker::DepthwiseConvParams::stride_height, nnfw::cker::DepthwiseConvParams::stride_width, nnfw::cker::DepthwiseConvParams::weights_offset, and nnfw::cker::PaddingValues::width.

Referenced by run().
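convQ8i(), like convQ8uPerChannel() and convQ8uPerTensor(), obtains quantized_activation_min/max from CalculateActivationRangeQuantized(). The sketch below follows the common TensorFlow Lite rule: quantize the float activation bounds with the output tensor's scale and zero point, then intersect them with the storage type's range. That onert's helper uses exactly this rule is an assumption; `Act` is a hypothetical stand-in for ir::Activation.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>
#include <limits>
#include <utility>

// Hypothetical stand-in for ir::Activation.
enum class Act { None, Relu, Relu6, ReluN1To1 };

// Quantized clamp bounds for an output with the given scale and zero point.
template <typename T>
std::pair<int32_t, int32_t> quantizedActivationRange(Act act, float scale, int32_t zero_point) {
  assert(scale > 0.0f);
  const int32_t qmin = std::numeric_limits<T>::min();
  const int32_t qmax = std::numeric_limits<T>::max();
  // Map a real value onto the quantized grid of the output tensor.
  auto quantize = [&](float f) -> int32_t {
    return zero_point + static_cast<int32_t>(std::round(f / scale));
  };
  switch (act) {
    case Act::Relu:
      return {std::max(qmin, quantize(0.0f)), qmax};
    case Act::Relu6:
      return {std::max(qmin, quantize(0.0f)), std::min(qmax, quantize(6.0f))};
    case Act::ReluN1To1:
      return {std::max(qmin, quantize(-1.0f)), std::min(qmax, quantize(1.0f))};
    case Act::None:
    default:
      return {qmin, qmax};
  }
}
```

For an int8 output with scale 0.05 and zero point -10, RELU6 yields the clamp range [-10, 110].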

◆ convQ8iHybridPerChannel()

void onert::backend::cpu::ops::DepthwiseConvolutionLayer::convQ8iHybridPerChannel ( )

Definition at line 206 of file DepthwiseConv2DLayer.cc.

References _activation, _bias, _dilationHeight, _dilationWidth, _input, _kernel, _multiplier, _output, _paddingLeft, _paddingTop, _strideHeight, _strideWidth, onert::util::CalculateActivationRange(), onert::backend::IPortableTensor::data_scales(), nnfw::cker::DepthwiseConvParams::depth_multiplier, nnfw::cker::reference_integer_ops::DepthwiseConvHybridPerChannel(), nnfw::cker::DepthwiseConvParams::dilation_height_factor, nnfw::cker::DepthwiseConvParams::dilation_width_factor, nnfw::cker::DepthwiseConvParams::float_activation_max, nnfw::cker::DepthwiseConvParams::float_activation_min, onert::backend::cpu::ops::getShape(), nnfw::cker::PaddingValues::height, offset(), nnfw::cker::DepthwiseConvParams::padding_values, nnfw::cker::PortableAsymmetricQuantizeFloats(), nnfw::cker::DepthwiseConvParams::stride_height, nnfw::cker::DepthwiseConvParams::stride_width, and nnfw::cker::PaddingValues::width.

Referenced by run().
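Judging by its references (PortableAsymmetricQuantizeFloats(), data_scales(), and the float activation bounds), convQ8iHybridPerChannel() appears to quantize float activations to int8 on the fly and combine them with per-channel kernel scales. The sketch below illustrates asymmetric int8 quantization of a float buffer in that spirit. It is a simplified illustration, not cker's PortableAsymmetricQuantizeFloats; details such as zero-point nudging may differ, and `AsymQuantized`/`asymmetricQuantize` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>
#include <vector>

// Result of quantizing one float buffer (e.g. one batch row) to int8.
struct AsymQuantized {
  std::vector<int8_t> values;
  float scale;
  int32_t zero_point;
};

// Simplified asymmetric int8 quantization: the range [min(0, lo), max(0, hi)]
// is mapped onto [-128, 127] so that 0.0f lands exactly on the zero point.
AsymQuantized asymmetricQuantize(const std::vector<float>& x) {
  constexpr int32_t kQMin = -128, kQMax = 127;
  AsymQuantized r{std::vector<int8_t>(x.size(), 0), 1.0f, 0};
  if (x.empty()) return r;
  const auto [lo_it, hi_it] = std::minmax_element(x.begin(), x.end());
  const double rmin = std::min(0.0, static_cast<double>(*lo_it));
  const double rmax = std::max(0.0, static_cast<double>(*hi_it));
  if (rmin == rmax) return r;  // all zeros: keep scale 1 and zero point 0
  const double scale = (rmax - rmin) / (kQMax - kQMin);
  const double zp = std::round(kQMin - rmin / scale);
  r.scale = static_cast<float>(scale);
  assert(r.scale > 0.0f);
  r.zero_point = static_cast<int32_t>(std::clamp(zp, double(kQMin), double(kQMax)));
  for (std::size_t i = 0; i < x.size(); ++i) {
    const double q = std::round(r.zero_point + x[i] / scale);
    r.values[i] = static_cast<int8_t>(std::clamp(q, double(kQMin), double(kQMax)));
  }
  return r;
}
```

Dequantizing with `(q - zero_point) * scale` recovers each input to within about one quantization step.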

◆ convQ8uPerChannel()

void onert::backend::cpu::ops::DepthwiseConvolutionLayer::convQ8uPerChannel ( )

Definition at line 143 of file DepthwiseConv2DLayer.cc.

References _activation, _bias, _dilationHeight, _dilationWidth, _input, _kernel, _multiplier, _output, _paddingLeft, _paddingTop, _strideHeight, _strideWidth, onert::backend::cpu::ops::CalculateActivationRangeQuantized(), onert::backend::IPortableTensor::data_zero_point(), onert::backend::IPortableTensor::data_zero_points(), nnfw::cker::DepthwiseConvParams::depth_multiplier, nnfw::cker::reference_integer_ops::DepthwiseConvPerChannel(), nnfw::cker::DepthwiseConvParams::dilation_height_factor, nnfw::cker::DepthwiseConvParams::dilation_width_factor, onert::backend::cpu::ops::getShape(), nnfw::cker::PaddingValues::height, nnfw::cker::DepthwiseConvParams::input_offset, nnfw::cker::DepthwiseConvParams::output_offset, nnfw::cker::DepthwiseConvParams::padding_values, nnfw::cker::DepthwiseConvParams::quantized_activation_max, nnfw::cker::DepthwiseConvParams::quantized_activation_min, nnfw::cker::DepthwiseConvParams::stride_height, nnfw::cker::DepthwiseConvParams::stride_width, and nnfw::cker::PaddingValues::width.

Referenced by run().
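Judging by its references to data_zero_points() and reference_integer_ops::DepthwiseConvPerChannel(), convQ8uPerChannel() handles uint8 kernels whose scale and zero point vary per output channel. The sketch below computes one output value under that scheme, assuming the usual convention that the int32 bias is quantized with scale `input_scale * weight_scales[oc]` and zero point 0. A double multiplier is used for readability where production kernels use a fixed-point multiplier and shift; `PerChannelParams` and `dwPerChannelOutput` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>
#include <vector>

// Hypothetical per-channel quantization parameters for a uint8 kernel.
struct PerChannelParams {
  float input_scale;
  int32_t input_zero_point;
  std::vector<float> weight_scales;         // one per output channel
  std::vector<int32_t> weight_zero_points;  // one per output channel
  float output_scale;
  int32_t output_zero_point;
};

// Accumulate one output value of channel `oc` from matching input/weight taps,
// then requantize it with that channel's own effective scale.
uint8_t dwPerChannelOutput(const PerChannelParams& p, int oc, const std::vector<uint8_t>& in_taps,
                           const std::vector<uint8_t>& w_taps, int32_t bias_q,
                           int32_t act_min, int32_t act_max) {
  assert(in_taps.size() == w_taps.size());
  int32_t acc = bias_q;
  for (std::size_t i = 0; i < in_taps.size(); ++i)
    acc += (int32_t(in_taps[i]) - p.input_zero_point) *
           (int32_t(w_taps[i]) - p.weight_zero_points[oc]);
  // Effective scale differs per channel: input_scale * weight_scale[oc] / output_scale.
  const double multiplier = double(p.input_scale) * p.weight_scales[oc] / p.output_scale;
  int32_t q = static_cast<int32_t>(std::lround(acc * multiplier)) + p.output_zero_point;
  q = std::clamp(q, act_min, act_max);  // fused activation in the quantized domain
  return static_cast<uint8_t>(q);
}
```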

◆ convQ8uPerTensor()

void onert::backend::cpu::ops::DepthwiseConvolutionLayer::convQ8uPerTensor ( )

Definition at line 108 of file DepthwiseConv2DLayer.cc.

References _activation, _bias, _dilationHeight, _dilationWidth, _input, _kernel, _multiplier, _output, _paddingLeft, _paddingTop, _strideHeight, _strideWidth, onert::backend::cpu::ops::CalculateActivationRangeQuantized(), onert::backend::IPortableTensor::data_zero_point(), nnfw::cker::DepthwiseConvParams::depth_multiplier, nnfw::cker::DepthwiseConvParams::dilation_height_factor, nnfw::cker::DepthwiseConvParams::dilation_width_factor, onert::backend::cpu::ops::GetQuantizedConvolutionMultiplier(), onert::backend::cpu::ops::getShape(), nnfw::cker::PaddingValues::height, nnfw::cker::DepthwiseConvParams::input_offset, nnfw::cker::DepthwiseConvParams::output_multiplier, nnfw::cker::DepthwiseConvParams::output_offset, nnfw::cker::DepthwiseConvParams::output_shift, nnfw::cker::DepthwiseConvParams::padding_values, nnfw::cker::DepthwiseConvParams::quantized_activation_max, nnfw::cker::DepthwiseConvParams::quantized_activation_min, onert::backend::cpu::ops::QuantizeMultiplier(), nnfw::cker::DepthwiseConvParams::stride_height, nnfw::cker::DepthwiseConvParams::stride_width, nnfw::cker::DepthwiseConvParams::weights_offset, and nnfw::cker::PaddingValues::width.

Referenced by run().
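convQ8uPerTensor() references GetQuantizedConvolutionMultiplier() and QuantizeMultiplier() and sets DepthwiseConvParams::output_multiplier and ::output_shift. In the usual TensorFlow Lite convention, the real multiplier `input_scale * weight_scale / output_scale` is split into a Q31 mantissa and a power-of-two shift so that the int32 accumulator can be rescaled with integer arithmetic only. The sketch below follows that convention; the lowercase function names are hypothetical, and the rounding step (int64 product, round half away from zero) is a simplification of the saturating rounding doubling high-multiply that gemmlowp-style kernels use.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Real-valued rescale factor for a quantized convolution.
inline double convolutionMultiplier(double input_scale, double weight_scale, double output_scale) {
  assert(output_scale > 0.0);
  return input_scale * weight_scale / output_scale;
}

// Split m into qm * 2^(shift - 31), with qm a Q31 mantissa in [2^30, 2^31).
inline void quantizeMultiplier(double m, int32_t* qm, int* shift) {
  if (m == 0.0) { *qm = 0; *shift = 0; return; }
  const double q = std::frexp(m, shift);  // m = q * 2^shift, q in [0.5, 1)
  int64_t q_fixed = static_cast<int64_t>(std::round(q * (int64_t{1} << 31)));
  if (q_fixed == (int64_t{1} << 31)) { q_fixed /= 2; ++*shift; }  // q rounded up to 1.0
  if (*shift < -31) { *shift = 0; q_fixed = 0; }                   // too small: flush to zero
  *qm = static_cast<int32_t>(q_fixed);
}

// Apply the quantized multiplier to an int32 accumulator.
inline int32_t multiplyByQuantizedMultiplier(int32_t x, int32_t qm, int shift) {
  const int total_shift = 31 - shift;
  assert(total_shift > 0 && total_shift < 63);
  const int64_t prod = static_cast<int64_t>(x) * qm;
  const int64_t mag = prod < 0 ? -prod : prod;
  const int64_t rounded = (mag + (int64_t{1} << (total_shift - 1))) >> total_shift;
  return static_cast<int32_t>(prod < 0 ? -rounded : rounded);
}
```

For instance, scales of 0.5, 0.5 and 1.0 give a multiplier of 0.25, stored as mantissa 2^30 with shift -1, and an accumulator of 100 rescales to 25.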

◆ run()

void onert::backend::cpu::ops::DepthwiseConvolutionLayer::run ( ) [override, virtual]

Implements onert::exec::IFunction.

Definition at line 343 of file DepthwiseConv2DLayer.cc.

References _input, _kernel, convFloat32(), convQ8i(), convQ8iHybridPerChannel(), convQ8uPerChannel(), convQ8uPerTensor(), onert::backend::IPortableTensor::data_scales(), and onert::backend::IPortableTensor::data_type().

Referenced by onert::backend::train::ops::DepthwiseConvolutionLayer::forward().
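run() consults _input, _kernel, data_type() and data_scales() before calling one of the five conv*() members, but the exact conditions are not documented on this page. The sketch below encodes one plausible selection rule inferred only from the member names: "Hybrid" is taken to mean float input with symmetric int8 weights, and "PerChannel" to mean more than one kernel scale. Treat it as an assumption, not onert's logic; `DType` and `selectDepthwiseKernel` are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <stdexcept>
#include <string>

// Hypothetical data-type tags; onert's real enum and its names differ.
enum class DType { Float32, QuantUInt8Asymm, QuantInt8Asymm, QuantInt8Symm };

// Returns the name of the member function this (assumed) rule would call.
std::string selectDepthwiseKernel(DType input, DType kernel, std::size_t kernel_scale_count) {
  if (input == DType::Float32)
    return kernel == DType::QuantInt8Symm ? "convQ8iHybridPerChannel" : "convFloat32";
  if (input == DType::QuantUInt8Asymm)
    return kernel_scale_count > 1 ? "convQ8uPerChannel" : "convQ8uPerTensor";
  if (input == DType::QuantInt8Asymm)
    return "convQ8i";
  throw std::runtime_error("DepthwiseConv: unsupported data type");
}
```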

Field Documentation

◆ _activation

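ir::Activation onert::backend::cpu::ops::DepthwiseConvolutionLayer::_activation {ir::Activation::NONE} [protected]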

Definition at line 78 of file DepthwiseConv2DLayer.h.

Referenced by configure(), convFloat32(), convQ8i(), convQ8iHybridPerChannel(), convQ8uPerChannel(), and convQ8uPerTensor().

◆ _bias

const IPortableTensor* onert::backend::cpu::ops::DepthwiseConvolutionLayer::_bias {nullptr} [protected]

Definition at line 62 of file DepthwiseConv2DLayer.h.

Referenced by configure(), convFloat32(), convQ8i(), convQ8iHybridPerChannel(), convQ8uPerChannel(), and convQ8uPerTensor().

◆ _dilationHeight

uint32_t onert::backend::cpu::ops::DepthwiseConvolutionLayer::_dilationHeight {1} [protected]

Definition at line 76 of file DepthwiseConv2DLayer.h.

Referenced by configure(), onert::backend::train::ops::DepthwiseConvolutionLayer::configureBackward(), convFloat32(), convQ8i(), convQ8iHybridPerChannel(), convQ8uPerChannel(), and convQ8uPerTensor().

◆ _dilationWidth

uint32_t onert::backend::cpu::ops::DepthwiseConvolutionLayer::_dilationWidth {1} [protected]

Definition at line 75 of file DepthwiseConv2DLayer.h.

Referenced by configure(), onert::backend::train::ops::DepthwiseConvolutionLayer::configureBackward(), convFloat32(), convQ8i(), convQ8iHybridPerChannel(), convQ8uPerChannel(), and convQ8uPerTensor().

◆ _input

const IPortableTensor* onert::backend::cpu::ops::DepthwiseConvolutionLayer::_input {nullptr} [protected]

Definition at line 60 of file DepthwiseConv2DLayer.h.

Referenced by onert::backend::train::ops::DepthwiseConvolutionLayer::backward(), configure(), convFloat32(), convQ8i(), convQ8iHybridPerChannel(), convQ8uPerChannel(), convQ8uPerTensor(), and run().

◆ _kernel

const IPortableTensor* onert::backend::cpu::ops::DepthwiseConvolutionLayer::_kernel {nullptr} [protected]

Definition at line 61 of file DepthwiseConv2DLayer.h.

Referenced by configure(), onert::backend::train::ops::DepthwiseConvolutionLayer::configureBackward(), convFloat32(), convQ8i(), convQ8iHybridPerChannel(), convQ8uPerChannel(), convQ8uPerTensor(), and run().

◆ _multiplier

uint32_t onert::backend::cpu::ops::DepthwiseConvolutionLayer::_multiplier {0} [protected]

Definition at line 73 of file DepthwiseConv2DLayer.h.

Referenced by configure(), convFloat32(), convQ8i(), convQ8iHybridPerChannel(), convQ8uPerChannel(), and convQ8uPerTensor().

◆ _output

IPortableTensor* onert::backend::cpu::ops::DepthwiseConvolutionLayer::_output {nullptr} [protected]

Definition at line 63 of file DepthwiseConv2DLayer.h.

Referenced by configure(), convFloat32(), convQ8i(), convQ8iHybridPerChannel(), convQ8uPerChannel(), and convQ8uPerTensor().

◆ _paddingBottom

uint32_t onert::backend::cpu::ops::DepthwiseConvolutionLayer::_paddingBottom {0} [protected]

◆ _paddingLeft

uint32_t onert::backend::cpu::ops::DepthwiseConvolutionLayer::_paddingLeft {0} [protected]

Definition at line 65 of file DepthwiseConv2DLayer.h.

Referenced by configure(), convFloat32(), convQ8i(), convQ8iHybridPerChannel(), convQ8uPerChannel(), and convQ8uPerTensor().

◆ _paddingRight

uint32_t onert::backend::cpu::ops::DepthwiseConvolutionLayer::_paddingRight {0} [protected]

◆ _paddingTop

uint32_t onert::backend::cpu::ops::DepthwiseConvolutionLayer::_paddingTop {0} [protected]

Definition at line 66 of file DepthwiseConv2DLayer.h.

Referenced by configure(), convFloat32(), convQ8i(), convQ8iHybridPerChannel(), convQ8uPerChannel(), and convQ8uPerTensor().

◆ _strideHeight

uint32_t onert::backend::cpu::ops::DepthwiseConvolutionLayer::_strideHeight {0} [protected]

Definition at line 71 of file DepthwiseConv2DLayer.h.

Referenced by configure(), convFloat32(), convQ8i(), convQ8iHybridPerChannel(), convQ8uPerChannel(), and convQ8uPerTensor().

◆ _strideWidth

uint32_t onert::backend::cpu::ops::DepthwiseConvolutionLayer::_strideWidth {0} [protected]

Definition at line 70 of file DepthwiseConv2DLayer.h.

Referenced by configure(), convFloat32(), convQ8i(), convQ8iHybridPerChannel(), convQ8uPerChannel(), and convQ8uPerTensor().

The documentation for this class was generated from the following files:

- runtime/onert/backend/cpu/ops/DepthwiseConv2DLayer.h

- runtime/onert/backend/cpu/ops/DepthwiseConv2DLayer.cc